Last year I found out that Google had completely deindexed my blog. It is still not indexed, despite all the work I put in. So I decided to write a follow-up to How I Got Deindexed by Google, where I analysed what was wrong with my blog. Unsurprisingly, there was more to fix. These silent bugs in the blog’s structure and markup had been present since 2022, when I migrated my blog to jbake.

Still Stuck: The "Crawled – Currently Not Indexed" Purgatory

By now, my index count sits at a frustrating single page.

Sadly, Google Search Console does not give more information on why it doesn’t index my blog. All pages are in the »Crawled – currently not indexed« category, so I at least know that Google can reach those pages. This is different from simple "not crawled" – the Google bot DID see my blog, but then just walked away without indexing it.

The DuckDuckGo Paradox

Maybe you have noticed that my on-page search redirects to duckduckgo.com. Interestingly, duckduckgo.com still has all my pages indexed.

This is actually a strong signal that the content is still good and valid, but the JSON-LD and microdata markup is not. Google appears to rely much more on these tags and attributes than other search engines such as Bing and DuckDuckGo.

So while DuckDuckGo rates the content, Google also rates the authority signals, and mine were either contradictory or simply broken. Nothing on my blog had changed, but Google had. My best guess: it was trying to source content for its Knowledge Graph.

Yes, all of this is invisible to a regular reader, but still very relevant to being included in search engine results.

Poking Under the Hood: The Deep Dive

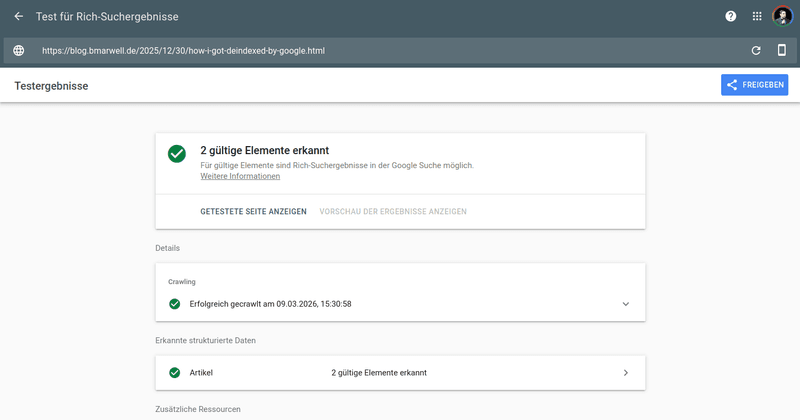

I figured there must still be something wrong with the data on my page. The content was fine — as the section above shows — so I went one layer deeper: Metadata. There is a particular way I include this in my FreeMarker templates. I looked at the Rich Results Test and Schema Markup Validator and found some bugs I could squash.

The Missing Slash: A URL That Pointed Nowhere

First of all, a slash was missing between the site host variable (which resolves to https://blog.bmarwell.de) and the ${content.uri} variable. This led to invalid URLs like https://blog.bmarwell.de2025/12/30/how-i-got-deindexed-by-google.html. Since Google’s data parser likely treats JSON-LD data like compiled code (use everything, or nothing at all if something is broken), this easy fix should have a high impact on being indexed.

The field in question was the canonical URL. It is part of the meta tags in the HTML header section.

Before:

    { "url": "https://blog.bmarwell.de2025/12/30/how-i-got-deindexed-by-google.html" }

After:

    { "url": "https://blog.bmarwell.de/2025/12/30/how-i-got-deindexed-by-google.html" }

I mean, look at it this way: The owner can’t even produce a valid URI. What is Google to think of that? This certainly hurts page authority.
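One way to rule out this class of bug is to join host and path through a helper that normalises the slash, instead of concatenating two strings. The blog's templates are FreeMarker, so the Python sketch below only illustrates the idea. `join_url` and the two sample variables are hypothetical names for this example.

```python
def join_url(site_host: str, content_uri: str) -> str:
    """Join a host and a path with exactly one slash between them."""
    return site_host.rstrip("/") + "/" + content_uri.lstrip("/")


# Hypothetical stand-ins for the two template variables.
site_host = "https://blog.bmarwell.de"
content_uri = "2025/12/30/how-i-got-deindexed-by-google.html"

broken = site_host + content_uri          # plain concatenation, slash missing
fixed = join_url(site_host, content_uri)  # slash normalised

print(broken)
print(fixed)
```

The helper gives the same result whether or not the host ends with a slash or the path starts with one, which is the property plain concatenation lacks.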

mainEntityOfPage Pointed at the Homepage

Then I discovered that the field mainEntityOfPage was hardcoded to the blog’s homepage. This is plainly wrong, because the article is the main entity of its own blog post page, not of the homepage. The correct description can be found at schema.org/BlogPosting.

With the schema-to-page association broken, no wonder Google did not index individual blog posts anymore!
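A mismatch like this is easy to test for once you know to look. The following Python sketch assumes that every post's `url` is also the page it is the main entity of, which holds for a blog like this one but is not a general schema.org rule. `main_entity_matches_url` is a hypothetical helper, not part of any library.

```python
import json


def main_entity_matches_url(json_ld: str) -> bool:
    """Return True if mainEntityOfPage refers to the same URL as "url"."""
    data = json.loads(json_ld)
    entity = data.get("mainEntityOfPage")
    # mainEntityOfPage may be a plain URL string or an object with "@id".
    entity_id = entity.get("@id") if isinstance(entity, dict) else entity
    return entity_id is not None and entity_id == data.get("url")


POST_URL = "https://blog.bmarwell.de/2025/12/30/how-i-got-deindexed-by-google.html"

hardcoded_homepage = json.dumps({
    "@type": "BlogPosting",
    "url": POST_URL,
    "mainEntityOfPage": {"@type": "WebPage", "@id": "https://blog.bmarwell.de/"},
})
per_article = json.dumps({
    "@type": "BlogPosting",
    "url": POST_URL,
    "mainEntityOfPage": {"@type": "WebPage", "@id": POST_URL},
})

print(main_entity_matches_url(hardcoded_homepage))  # False
print(main_entity_matches_url(per_article))         # True
```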

mainEntityOfPage is now set to the individual slug URL, e.g.:

mainEntityOfPage for BlogPosting:

    {
      "@context": "https://schema.org",
      "@type": "BlogPosting",
      "url": "https://blog.bmarwell.de/2025/12/30/how-i-got-deindexed-by-google.html",
      "dateCreated": "2025-12-30T00:00:00Z",
      "dateModified": "2025-12-30T12:00:00Z",
      "inLanguage": "en-GB",
      "mainEntityOfPage": {
        "@type": "WebPage",
        "@id": "https://blog.bmarwell.de/2025/12/30/how-i-got-deindexed-by-google.html"
      }
    }

Relative URLs Are Invalid in JSON-LD

Another issue I discovered in both the meta tags and the JSON-LD markup was the usage of relative paths. Some argue it doesn’t matter for Google — that Google appends the host name correctly. Others recommend absolute URIs to be safe.

My URLs were not just relative — they were ../../ style paths, which are a different problem entirely. Those are genuinely rejected. Whatever your view on simple relative paths, deeply relative paths like that are never safe in JSON-LD.
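To find such paths before a crawler does, the parsed JSON-LD can be walked recursively and every URL-valued field checked for a scheme and a host. This is a minimal sketch: the set of keys in `URL_KEYS` is my own assumption about which fields carry URLs, and it only checks plain string values, not lists of URLs.

```python
from urllib.parse import urlparse

# Assumption: the keys that are expected to hold a single URL string.
URL_KEYS = {"url", "@id", "image", "logo"}


def find_relative_urls(node, path=""):
    """Yield (json_path, value) for URL fields that are not absolute URLs."""
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{path}.{key}" if path else key
            if key in URL_KEYS and isinstance(value, str):
                parsed = urlparse(value)
                if not (parsed.scheme and parsed.netloc):
                    yield child, value
            else:
                # Nested objects (e.g. author, publisher) are checked too.
                yield from find_relative_urls(value, child)
    elif isinstance(node, list):
        for index, item in enumerate(node):
            yield from find_relative_urls(item, f"{path}[{index}]")


# Hypothetical document with one absolute and two relative URLs.
doc = {
    "@type": "BlogPosting",
    "url": "https://blog.bmarwell.de/2025/12/30/how-i-got-deindexed-by-google.html",
    "image": "../../images/cover.png",
    "author": {"@type": "Person", "url": "/about.html"},
}

for json_path, value in find_relative_urls(doc):
    print(f"{json_path}: {value}")
```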

Twitter Card: property= vs name=

Looking further at validator outputs, e.g. using the Twitter Card Validator, I found out I used the wrong attribute names for my Twitter Cards (now »X cards«).

Before:

    <meta property="twitter:image" content="..." />

After:

    <meta name="twitter:image" content="..." />

I had to make sure to use name= attributes for Twitter/X cards, not property=. The property= attribute is what Open Graph tags use.
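A small scan over the rendered HTML can flag this mix-up. The sketch below uses Python's standard `html.parser`; the class name `TwitterMetaChecker` and the sample markup are made up for illustration.

```python
from html.parser import HTMLParser


class TwitterMetaChecker(HTMLParser):
    """Collect twitter:* meta tags that use property= instead of name=."""

    def __init__(self):
        super().__init__()
        self.misplaced = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        prop = dict(attrs).get("property") or ""
        if prop.startswith("twitter:"):
            self.misplaced.append(prop)


# Hypothetical head section with one wrong and two correct tags.
sample = (
    '<meta property="twitter:image" content="..." />'
    '<meta name="twitter:card" content="summary" />'
    '<meta property="og:title" content="..." />'
)

checker = TwitterMetaChecker()
checker.feed(sample)
print(checker.misplaced)  # ['twitter:image']
```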

OpenGraph Profile Namespace Violation

I removed both profile:first_name and profile:last_name from blog posts. Those attributes are only valid with og:type=profile. The linter flags this as an error.
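The rule can be expressed as a check: profile:* properties are only acceptable when og:type is profile. The following is a sketch of that rule, not the linter I used; `stray_profile_properties` is a hypothetical helper and the sample markup is invented.

```python
from html.parser import HTMLParser


class OpenGraphCollector(HTMLParser):
    """Collect all <meta property="..." content="..."> pairs of a page."""

    def __init__(self):
        super().__init__()
        self.properties = {}

    def handle_starttag(self, tag, attrs):
        if tag == "meta":
            attributes = dict(attrs)
            if attributes.get("property"):
                self.properties[attributes["property"]] = attributes.get("content")


def stray_profile_properties(html: str) -> list[str]:
    """Return profile:* properties on a page whose og:type is not 'profile'."""
    collector = OpenGraphCollector()
    collector.feed(html)
    if collector.properties.get("og:type") == "profile":
        return []
    return sorted(p for p in collector.properties if p.startswith("profile:"))


# Hypothetical blog post head: og:type is article, yet profile:* tags exist.
post_head = (
    '<meta property="og:type" content="article" />'
    '<meta property="profile:first_name" content="Benjamin" />'
    '<meta property="profile:last_name" content="Marwell" />'
)

print(stray_profile_properties(post_head))
```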

Sitemap vs noindex: Conflicting Signals

The mostly unused and unlinked archive pages from my blog were appearing in the sitemap. However, I had marked them as noindex,follow: they give crawlers some hints about the article pages, but have no proper content of their own.

Since they appeared in the sitemap of blog.bmarwell.de, this sent a mixed signal: index them (according to sitemap.xml), do not index them (according to the meta tags).

A closer look at the sitemap also revealed that I was using the wrong date: the »first published« date, not the updated date (which is :jbake-updated:). So Google never knew a post had been updated.
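The sitemap/noindex conflict described above can be detected by cross-checking the two sources. This sketch assumes you already have the robots meta value for each page in a dictionary (how you collect it is up to you). The function names and the archive URL are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml: str) -> set[str]:
    """Extract all <loc> values from a sitemap document."""
    root = ET.fromstring(sitemap_xml)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def conflicting_urls(sitemap_xml: str, robots_by_url: dict[str, str]) -> list[str]:
    """Return URLs that are listed in the sitemap but carry a noindex hint."""
    listed = sitemap_urls(sitemap_xml)
    return sorted(
        url for url in listed
        if "noindex" in robots_by_url.get(url, "").lower()
    )


# Hypothetical sitemap and robots meta values.
SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://blog.bmarwell.de/archive.html</loc></url>
  <url><loc>https://blog.bmarwell.de/2025/12/30/how-i-got-deindexed-by-google.html</loc></url>
</urlset>"""

ROBOTS = {
    "https://blog.bmarwell.de/archive.html": "noindex,follow",
    "https://blog.bmarwell.de/2025/12/30/how-i-got-deindexed-by-google.html": "index,follow",
}

print(conflicting_urls(SITEMAP, ROBOTS))
```

Every URL this returns needs a decision: either drop it from the sitemap or remove the noindex.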

Heading Hierarchy: Two Competing <h1> Elements

This is a fundamental beginner’s mistake: Never have two primary headings on one page. I had those on the tag pages.

While I do not let Google index them, this still sends a negative signal to bots parsing the heading hierarchy.

Now the sidebar title falls back to h3 on tag pages, so that the tag page title is the only h1 on those pages.

Before the fix:

    <h1>blog.bmarwell.de</h1> <!-- sidebar site title -->
    ...
    <h1>Tag: Java</h1> <!-- page heading -->

This also helps with accessibility, something I do not want to miss out on in any case.
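Counting h1 elements per rendered page is a cheap regression test for this. A minimal sketch with Python's standard `html.parser` follows; `count_h1` is a hypothetical helper name.

```python
from html.parser import HTMLParser


class H1Counter(HTMLParser):
    """Count the <h1> start tags in a document."""

    def __init__(self):
        super().__init__()
        self.count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "h1":
            self.count += 1


def count_h1(html: str) -> int:
    counter = H1Counter()
    counter.feed(html)
    return counter.count


before = "<h1>blog.bmarwell.de</h1><main><h1>Tag: Java</h1></main>"
after = "<h3>blog.bmarwell.de</h3><main><h1>Tag: Java</h1></main>"

print(count_h1(before))  # 2
print(count_h1(after))   # 1
```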

Author Identity: Telling Google Who You Are

Fixing the schema structure was one thing. But Google also needs to know who wrote those pages — and that link was broken too.

The Person schema in my microdata had a name and a job title, but no url field. Without a URL, Google cannot connect the author name to a real entity in its Knowledge Graph. A name alone is just a string — it could be anyone called »Benjamin Marwell«.

Worse, when I finally added the URL, I noticed it was pointing to https://www.bmarwell.de/ — with a www prefix. The canonical URL is https://bmarwell.de/ (no www). Those are two different identifiers for Google’s entity resolution, and I had been pointing at the wrong one all along.
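I do not know how Google compares identifiers internally, so the safe assumption is that it does not normalise anything for you. The sketch below compares two URLs strictly and deliberately does not strip the www prefix; `same_identifier` is a hypothetical helper.

```python
from urllib.parse import urlparse


def same_identifier(url_a: str, url_b: str) -> bool:
    """Compare scheme, host and path; 'www.' is deliberately not stripped."""
    a, b = urlparse(url_a), urlparse(url_b)
    return (a.scheme, a.netloc.lower(), a.path or "/") == (
        b.scheme,
        b.netloc.lower(),
        b.path or "/",
    )


print(same_identifier("https://www.bmarwell.de/", "https://bmarwell.de/"))  # False
print(same_identifier("https://bmarwell.de", "https://bmarwell.de/"))       # True
```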

Beyond the schema block, there is a mechanism called IndieWeb rel=me. <link rel="me"> elements in the document <head> let identity verifiers — including Mastodon — cross-reference your profile across platforms. My sidebar already emitted <a rel="me"> for each social profile, but the <head> did not. Adding them there means every single page on this blog now asserts the same identity, not just the sidebar widget.

    <link rel="me" href="https://github.com/bmarwell" />
    <link rel="me" href="https://fosstodon.org/@bmarwell" />
    <link rel="me" href="https://bmarwell.de/" />

Two smaller fixes rounded this out. itemprop="image" was missing from the BlogPosting microdata block entirely. And structured data date fields were emitted without a UTC timezone suffix: 2025-12-30T12:00:00 instead of 2025-12-30T12:00:00Z. Timezone-naive timestamps are technically ambiguous and flagged as warnings by the Schema Markup Validator.
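The timestamp fix boils down to always emitting an explicit UTC marker. The following Python sketch shows the idea, not the template code I use. It assumes that naive values are already meant as UTC, which is an assumption you should verify for your own data. `to_utc_timestamp` is a hypothetical helper.

```python
from datetime import datetime, timedelta, timezone


def to_utc_timestamp(value: datetime) -> str:
    """Format a datetime as ISO 8601 with an explicit 'Z' suffix."""
    if value.tzinfo is None:
        # Assumption: naive values are already in UTC.
        value = value.replace(tzinfo=timezone.utc)
    return value.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


naive = datetime(2025, 12, 30, 12, 0, 0)
cet = datetime(2025, 12, 30, 13, 0, 0, tzinfo=timezone(timedelta(hours=1)))

print(to_utc_timestamp(naive))  # 2025-12-30T12:00:00Z
print(to_utc_timestamp(cet))    # 2025-12-30T12:00:00Z
```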

The Authority Network: Cross-Domain Trust

Fixing what my own blog says about its author only goes so far. Google also weighs what the rest of the web says about you — and that picture was equally thin. To build that external trust, I revived and reworked two domains.

My previous orchestra is the symphonic wind band of Schaumburg. By reviving its website, I was able to include a »maintained by« link, which feeds the identity cluster Google is probably going to build in the background. But honestly, I always wanted to get the site up and running again anyway. Now was as good a time as any to do that.

The other "power move" a webmaster can make is to redirect traffic and "link authority" from their own top-level domain. I completely reworked bmarwell.de into a landing page about myself, added some rel=me links to social networks like LinkedIn and GitHub, and linked a blog article.

Then, just a month ago at the time of writing, my local JUG from Hanover re-acquired jug-h.de. This was a great opportunity to test JSON-LD data over there as well, implement a similar design for the JUG, and link some speakers, as well as myself as the current maintainer.

Some of those domains have been around for two decades. Links from those pages are good for domain authority!

For example, sbo-schaumburg.de was registered in 2003 or earlier, as the Wayback Machine shows.

The first capture of jug-h.de on archive.org was in April 2014. Good enough!

My domain (bmarwell.de) itself dates back to 2010 or earlier, as the 2010 Wayback Machine capture shows.

What to Expect: The S-Curve of Re-Indexing

I asked GitHub Copilot what to expect after all these fixes. Here is the interview.

Copilot’s answers below are not based on any privileged access to Google’s systems. Like any language model, it recognises patterns in the documentation, community knowledge, and case studies it was trained on, and synthesises them into predictions. Those predictions are well-sourced, but they are still predictions. Take them as informed extrapolation, not inside knowledge.

Me: I just pushed all the fixes. How long until I see results in Google Search Console?

Don’t expect results overnight. Google’s own documentation states that re-crawling after a fix takes "a few days to a few weeks" per URL — and for a blog with 800+ pages, crawl budget means that queue stretches further still. The typical pattern is an S-curve: a slow start over the first few weeks as Googlebot works through the backlog, then an acceleration once the entity graph updates and trust starts propagating, then a plateau at the new baseline. Realistically, you are looking at weeks to months, not days.

Me: What exactly is an S-curve? Why not linear?

Think of it like compound interest. The first pages Googlebot re-crawls are isolated — they get re-evaluated on their own merit, which moves the needle slowly. But as more fixed pages accumulate, Google’s structured data layer starts connecting them: the author identity resolves, the cross-domain links reinforce each other, the schema blocks corroborate instead of contradict. That network effect accelerates re-indexing disproportionately. Then it flattens again once the "easy wins" are exhausted and only the long-tail pages remain. That is the S-shape: slow, fast, slow.

Me: What should I actually watch for in GSC to know it’s working?

Three signals in order of importance. First, watch the "Crawled – currently not indexed" count in the Page Indexing report: it should start dropping as pages graduate to "Indexed". Second, watch the same report for any new error categories appearing — you want that list to shrink, not grow. Third, watch impressions in the Performance report: impressions recover before clicks, so a rising impressions line is the first real proof that pages are appearing in results again. If all three are moving in the right direction after four to six weeks, the fixes are working.

Me: Is there any guarantee this actually works?

No. Google’s own SEO Starter Guide is explicit: no one can guarantee a ranking or indexing outcome, including Google itself. What you have done is removed every known contradictory or broken signal from your markup. You have given Google clean, consistent, spec-compliant data to work with. That is the maximum a site owner can do. Whether Google acts on it — and how quickly — is entirely its decision.

I will update this section once the data comes in.

Lessons Learned

Key takeaway: Keeping a blog up to date, technically, is a lot of work. When I decided to add JSON-LD and schema.org markup, it was for better visibility. Little did I know it could backfire that much!

So, what I actually learned, split up into individual lessons:

Schema markup works like a binary switch: either it is on (valid) or off (invalid).

Relative URLs in JSON-LD are not safe: mine were silently rejected, not corrected. Use absolute URLs, full stop.

noindex and sitemap entries are mutually exclusive. Pick one signal per page.

Heading hierarchy still matters (who knew…).

Meta tag attribute names matter: OGP uses property=, Twitter Cards use name=. Mixing them up means the card never renders.

Identity anchoring is a thing, and it feeds into what Google calls E-E-A-T: Experience, Expertise, Authoritativeness and Trustworthiness.

Contradictory signals are the hardest bugs to find because nothing looks broken on the rendered page.

Conclusion

Since the first article, I worked through performance, content quality, semantic HTML — and still hit a wall. The bugs documented here were invisible to any human reader, and invisible to DuckDuckGo. Only Google cared, because only Google was trying to resolve my pages as entities in its Knowledge Graph.

The fixes themselves were not complicated. A missing slash, a wrong attribute name, a field pointing at the homepage instead of the article. Each one individually is a beginner’s mistake. Together, they were enough to keep 800 pages out of the index for years.

The engine is now primed — or at least it should be. Whether Google agrees is still an open question. I will write a follow-up once the S-curve completes and there is actual GSC data to show.

If you are stuck in the same »Crawled – currently not indexed« purgatory, start with the Rich Results Test and the Schema Markup Validator. There is a good chance something in your markup is silently broken too.